Introduction

In the rush and hype surrounding AI adoption, one truth stands out: powerful models alone are rarely enough. Just as solid foundations in software development, project management, or helpdesk support separate great services from mediocre ones, AI systems need a proven blueprint.

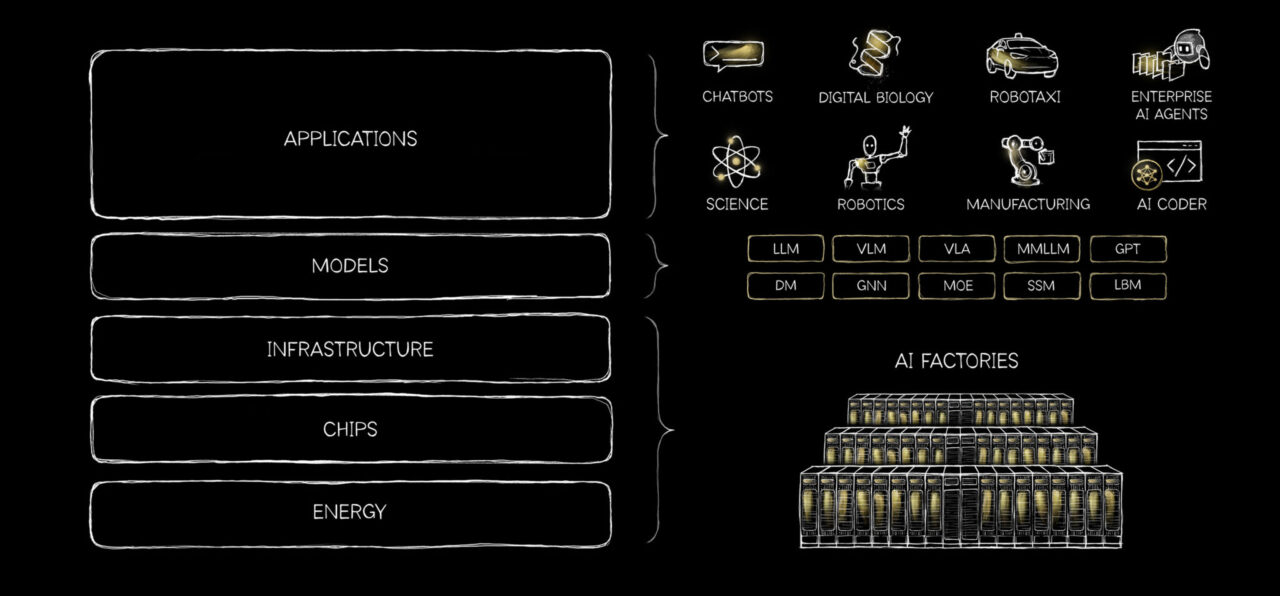

NVIDIA CEO Jensen Huang describes this reality through the metaphor of AI as a "5-layer cake": Energy, Chips, Infrastructure, Models, and Applications. Every successful AI application "pulls on every layer beneath it, all the way down to the power plant that keeps it alive" (Huang, 2026).

What is AI Architecture?

Imagine you want to build a really smart house, one that can cook meals, clean itself, entertain guests, and even learn your daily habits over time. You wouldn’t just buy a bunch of fancy gadgets (a robot vacuum, a smart fridge, voice assistants, and security cameras) and throw them all into the same room hoping they magically work together.

That’s where AI architecture comes in. AI architecture is simply the overall blueprint or master plan for how all the different pieces of an AI system fit together. It answers big questions like:

- What are the main parts we need? (For example, the brain that thinks, the memory that remembers past conversations, the tools that can search the web or send emails, and the safety checks.)

- How do these parts connect and talk to each other?

- How does information flow from start to finish?

- How do we make sure the whole system is fast enough, cheap enough to run, secure, and reliable when things go wrong?
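To make these questions concrete, here is a minimal sketch of how the parts might be wired together. It is an illustration only: the names `Memory`, `Assistant`, and `echo_brain` are hypothetical, and the "brain" is a stub rather than a real model.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Hypothetical component types; a real system would wrap a model API,
# a database, and real tools behind these same seams.
Brain = Callable[[str, List[str]], str]   # (request, context) -> answer
Tool = Callable[[str], str]               # query -> result
SafetyCheck = Callable[[str], bool]       # text -> is it allowed?


@dataclass
class Memory:
    """Remembers past turns so later requests can use them."""
    turns: List[str] = field(default_factory=list)

    def recall(self, limit: int = 3) -> List[str]:
        return self.turns[-limit:]        # a copy of the most recent turns

    def remember(self, text: str) -> None:
        self.turns.append(text)


@dataclass
class Assistant:
    """Wires the parts together and defines how information flows."""
    brain: Brain
    memory: Memory
    tools: Dict[str, Tool]
    is_safe: SafetyCheck

    def handle(self, request: str) -> str:
        if not self.is_safe(request):             # 1. safety check on input
            return "Sorry, I can't help with that."
        context = self.memory.recall()            # 2. recall past turns
        if request.startswith("search:"):         # 3. optional tool call
            query = request[len("search:"):].strip()
            context.append(self.tools["search"](query))
        answer = self.brain(request, context)     # 4. the brain reasons
        self.memory.remember(request)             # 5. store for next time
        return answer


# Stub brain so the sketch runs without any external service.
def echo_brain(request: str, context: List[str]) -> str:
    return f"Answer to '{request}' using {len(context)} context item(s)"


assistant = Assistant(
    brain=echo_brain,
    memory=Memory(),
    tools={"search": lambda q: f"results for {q}"},
    is_safe=lambda text: "password" not in text.lower(),
)
print(assistant.handle("search: smart fridges"))
print(assistant.handle("what did I ask before?"))
```

The point of the sketch is the shape, not the stubs: each part sits behind a small interface, so the brain, memory, or tools can be swapped without rewriting the flow in `handle`.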

Just like an architect draws detailed plans for a house, deciding where the kitchen, bedrooms, plumbing, and electrical wiring go, AI architecture designs the structure before anyone starts coding or training models.

A good architecture makes the system:

- Scalable: it can grow without falling apart

- Maintainable: easy to fix or improve later

- Reliable: it doesn’t break or give weird answers all the time

Without a solid architecture, even the most powerful AI models become like a pile of expensive gadgets that don’t cooperate. They might work okay in simple tests but fail in real life.

In this article, we explore orchestrating intelligence: how to design AI architectures whose parts actually work together. We'll examine what architecture and orchestration really mean, the single most important factor in architectural decisions, and practical considerations such as cost, time, and availability. Finally, we'll view these concepts through the lens of an AI engineer, drawing parallels to the classic ETL process reimagined with intelligent agents.

What is AI Orchestration?

If AI Architecture is the blueprint for building a smart house, then AI Orchestration is the conductor directing the entire performance so everything works together beautifully.

Imagine you’ve built a fancy smart house with a robot chef, a cleaning bot, a security system, and a personal assistant. Without a conductor, the chef might keep cooking while the cleaner tries to mop the kitchen floor. Total chaos!

AI orchestration is that conductor. It coordinates all the different AI parts (models, agents, tools, and memory) so they play in harmony, at the right time, and toward the same goal (IBM, 2026).

How It Makes ETL Smarter

Traditional ETL (Extract, Transform, Load) is like a fixed assembly line with rigid steps. AI orchestration upgrades it by adding intelligence:

- It uses dynamic routing, deciding the best next step based on real-time results instead of a fixed plan.

- It can run tasks in parallel, loop for improvements, or call a human when needed.

- This turns rigid pipelines into flexible, thinking workflows.
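A small sketch may help show what dynamic routing means in practice. It covers routing, looping, and human escalation only (not parallel execution), and it uses no framework. The step names, the `gain` parameter, and the quality threshold are all hypothetical stand-ins for real model calls and evaluators.

```python
from typing import Any, Callable, Dict, List

State = Dict[str, Any]


def draft(state: State) -> State:
    # Stand-in for a model call; each attempt yields a somewhat better draft.
    # Any earlier review verdict is dropped because the draft has changed.
    kept = {k: v for k, v in state.items() if k != "approved"}
    attempts = kept.get("attempts", 0) + 1
    return {**kept, "attempts": attempts, "quality": kept["gain"] * attempts}


def review(state: State) -> State:
    # Stand-in for an evaluator that scores the current draft.
    return {**state, "approved": state["quality"] >= 0.7}


def ask_human(state: State) -> State:
    # Escalation path; a real system might open a ticket or notify someone.
    return {**state, "escalated": True}


def route(state: State) -> str:
    """Pick the next step from the latest results, not from a fixed plan."""
    if state.get("escalated"):
        return "done"
    if "quality" not in state:
        return "draft"
    if "approved" not in state:
        return "review"
    if state["approved"]:
        return "done"
    if state["attempts"] >= 3:
        return "ask_human"
    return "draft"                      # loop back for another attempt


STEPS: Dict[str, Callable[[State], State]] = {
    "draft": draft,
    "review": review,
    "ask_human": ask_human,
}


def run(state: State, max_steps: int = 20) -> State:
    trace: List[str] = []
    for _ in range(max_steps):          # hard cap so a bad route cannot spin forever
        step = route(state)
        if step == "done":
            break
        trace.append(step)
        state = STEPS[step](state)
    return {**state, "trace": trace}


print(run({"gain": 0.4})["trace"])      # improves quickly, so it is approved
print(run({"gain": 0.1})["trace"])      # improves slowly, so it is escalated
```

The same three steps produce different paths depending on intermediate results, which is the difference between this and a fixed assembly line.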

Popular frameworks like LangGraph and CrewAI offer different conducting styles: LangGraph favors precise control (like a strict orchestra conductor following exact sheet music), while CrewAI favors team-style collaboration (like a band leader guiding musicians with defined roles).

If you're working in the Microsoft ecosystem, Microsoft Foundry acts like a professional concert hall that hosts the performance. You can bring your own conductors by integrating LangGraph or CrewAI, or use Microsoft's built-in tools like Semantic Kernel and AutoGen for managed, scalable orchestration with enterprise-grade security, monitoring, and deployment.

The Most Important Architecture Decision

When choosing your AI architecture and orchestration approach, many things matter: team skills, technology stack, and integration needs. However, one factor stands clearly above the rest:

Alignment with your specific use case, especially how predictable versus dynamic your workflows need to be, and how much control, auditability, and error-handling you require.

A simple customer support chatbot may only need lightweight routing. But a complex multi-agent research system or fully autonomous workflow demands strong state management, branching logic, and recovery mechanisms. Choosing the wrong approach is like using a sledgehammer to crack a nut, or trying to build a skyscraper with toy blocks.

This top-level alignment should guide everything else. Cost, time, and availability are critical secondary constraints that can make or break your project in the real world.

Practical Considerations

While alignment with your use case comes first, three practical factors heavily influence your final architectural decisions:

- Cost: Heavy orchestration with lots of agent handoffs and state management gives better control but can significantly increase compute usage and running costs.

- Time / Latency: More sophisticated orchestration adds extra steps and decision points, which can slow down responses. Simple routing is faster but less flexible.

- Availability & Reliability: Robust orchestration improves error recovery and uptime, but complex systems can introduce more points of failure if not designed carefully.

| Dimension | Simple System | Complex Orchestrated System |
|---|---|---|
| Cost | Low | High |
| Latency | Low | High |
| Flexibility | Low | High |
| Reliability | Medium | Depends on design quality |

There’s always a trade-off. Using heavy graph-based orchestration (like LangGraph) gives you excellent control and auditability, but it usually costs more and may increase latency. Lighter frameworks (like basic CrewAI flows) are cheaper and faster to build, but they can become brittle as your system grows more complex.

The sweet spot is finding the lightest orchestration that still meets your use case needs, without over-engineering or under-engineering the solution.
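A back-of-the-envelope estimate can make the trade-off tangible before any framework is chosen. The sketch below assumes model calls run one after another and that cost scales with tokens; every number in it (price, latency, call counts) is a hypothetical placeholder, not a real benchmark or price list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Design:
    name: str
    llm_calls: int          # model calls per request, assumed sequential
    tokens_per_call: int    # average prompt + completion tokens per call


# Hypothetical figures for illustration only; substitute your provider's
# actual pricing and your own measured latency.
PRICE_PER_1K_TOKENS = 0.002   # dollars
SECONDS_PER_CALL = 1.5


def cost_per_request(d: Design) -> float:
    return d.llm_calls * d.tokens_per_call / 1000 * PRICE_PER_1K_TOKENS


def latency_per_request(d: Design) -> float:
    return d.llm_calls * SECONDS_PER_CALL


simple = Design("single-call router", llm_calls=1, tokens_per_call=1500)
heavy = Design("multi-agent graph", llm_calls=6, tokens_per_call=2500)

for d in (simple, heavy):
    print(f"{d.name}: ${cost_per_request(d):.4f} per request, "
          f"{latency_per_request(d):.1f}s latency")
```

Under these assumed numbers the heavier design costs about ten times more per request and takes six times longer. Whether that is worth paying depends on how much control and recovery the use case actually needs.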

AI Engineering Through the ETL Lens

To truly understand modern AI systems, it helps to view them through a familiar engineering paradigm: ETL (Extract, Transform, Load).

Traditionally, ETL is a data integration process used to collect, clean, and move data from multiple sources into a structured system for analysis (IBM, 2026; Amazon Web Services, n.d.). While originally designed for data warehousing, this same pattern maps surprisingly well to how modern AI systems operate, especially those using orchestration and agents.

In AI engineering, we are no longer just moving data; we are moving context, decisions, and actions. The ETL model evolves from a static pipeline into a dynamic, intelligent workflow.

Extract: Gathering Context and Signals

In traditional ETL, the extract phase involves pulling raw data from multiple sources such as databases, APIs, or files into a staging area (IBM, 2026).

In AI systems, extraction becomes context gathering. This includes:

- Retrieving documents from vector databases (RAG systems)

- Calling APIs or tools (search, CRM, internal systems)

- Pulling conversation history or memory

Instead of raw data, the system extracts the relevant knowledge it needs to reason. The quality of this step directly impacts the intelligence of the system: poor inputs lead to poor outputs.
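As a rough sketch, context gathering can be thought of as one function that pulls from several sources and returns a single bundle. Everything here is a hypothetical stand-in: the in-memory `DOCUMENTS` list replaces a vector database, word overlap replaces embedding similarity, and `lookup_customer` replaces a real API call.

```python
from typing import Any, Dict, List

# Hypothetical in-memory stand-ins for a document store and a
# conversation history store.
DOCUMENTS = [
    "Refunds are processed within 5 business days.",
    "Premium plans include priority support.",
    "Passwords can be reset from the account page.",
]
HISTORY = ["user: hi", "assistant: hello, how can I help?"]


def retrieve(query: str, docs: List[str], k: int = 1) -> List[str]:
    """Rank documents by shared words.

    This is a deliberate simplification; a real RAG system would compare
    embeddings in a vector database instead of counting word overlap.
    """
    words = set(query.lower().split())
    scored = [(len(words & set(d.lower().rstrip(".").split())), d) for d in docs]
    ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
    return [doc for score, doc in ranked[:k] if score > 0]


def lookup_customer(customer_id: str) -> Dict[str, str]:
    # Stub for an API or tool call (for example, a CRM lookup).
    return {"id": customer_id, "plan": "premium"}


def gather_context(query: str, customer_id: str) -> Dict[str, Any]:
    """The 'extract' step: collect what the model needs in order to reason."""
    return {
        "documents": retrieve(query, DOCUMENTS),
        "customer": lookup_customer(customer_id),
        "history": HISTORY[-5:],        # only the most recent turns
    }


context = gather_context("how long do refunds take", "c-42")
print(context["documents"])
```

If `retrieve` returns nothing relevant, the downstream model has nothing to ground its answer in, which is why retrieval quality tends to dominate output quality.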

Transform: Reasoning and Orchestration

In ETL, transformation is where data is cleaned, validated, and reshaped to meet business requirements (IBM, 2026).

In AI, this becomes the core intelligence layer.

- LLMs perform reasoning and decision-making

- Agents collaborate, critique, or refine outputs

- Workflows branch, loop, or retry based on intermediate results

This is where orchestration frameworks like LangGraph or agent systems operate, turning static pipelines into adaptive systems. Instead of predefined transformations, AI systems dynamically decide how to process information in real time.

Much like ETL applies business rules, AI orchestration applies reasoning policies, prompts, and control logic.
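One common shape for this stage is a bounded write-and-critique loop. The sketch below is a framework-free illustration of that idea, not how LangGraph or any particular library implements it. The `stub_writer` and `stub_critic` functions are hypothetical placeholders for prompted model calls.

```python
from typing import Callable, List, Optional, Tuple

Writer = Callable[[str, Optional[str]], str]   # (task, feedback) -> draft
Critic = Callable[[str], Optional[str]]        # draft -> feedback, or None if acceptable


def refine(task: str, writer: Writer, critic: Critic,
           max_rounds: int = 3) -> Tuple[str, List[str]]:
    """Alternate writer and critic until the critic is satisfied or rounds run out."""
    feedback: Optional[str] = None
    notes: List[str] = []
    draft = ""
    for _ in range(max_rounds):         # control logic: a hard upper bound
        draft = writer(task, feedback)
        feedback = critic(draft)
        if feedback is None:            # critic accepted the draft
            break
        notes.append(feedback)
    return draft, notes


# Stub agents; in practice each would be a prompted model call.
def stub_writer(task: str, feedback: Optional[str]) -> str:
    summary = f"Summary of {task}"
    return summary + " (source: internal wiki)" if feedback else summary


def stub_critic(draft: str) -> Optional[str]:
    return None if "source:" in draft else "cite a source"


final, notes = refine("Q3 incident report", stub_writer, stub_critic)
print(final)
print(notes)
```

The `max_rounds` cap plays the role of a reasoning policy here: it keeps an unsatisfiable critic from looping forever and gives the caller the last draft plus the feedback history to inspect.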

Load: Delivering Outcomes and Taking Action

In traditional ETL, the load phase moves transformed data into a target system such as a data warehouse for downstream use (IBM, 2026).

In AI systems, loading becomes action and delivery.

- Returning responses to users

- Updating databases or knowledge bases

- Triggering workflows (tickets, emails, automations)

Crucially, modern orchestration adds state management and recovery, ensuring that even if part of the process fails, the system can retry, resume, or gracefully degrade instead of breaking entirely.

This transforms the “load” step from a simple data write into a reliable execution layer.
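The retry, resume, and degrade behaviors can be sketched in a few lines. This is a simplified illustration under stated assumptions: the `deliver` function and the three actions are hypothetical, and the `done` set lives in memory, whereas a real system would persist its checkpoints to durable storage.

```python
import time
from typing import Callable, Dict, List, Set

Action = Callable[[str], None]


def deliver(payload: str, actions: Dict[str, Action], done: Set[str],
            retries: int = 2, delay: float = 0.0) -> List[str]:
    """Run each pending action with retries; return the names that still failed."""
    failed: List[str] = []
    for name, action in actions.items():
        if name in done:                    # resume: skip steps finished earlier
            continue
        for attempt in range(retries + 1):
            try:
                action(payload)
                done.add(name)              # checkpoint the success
                break
            except Exception:
                if attempt == retries:
                    failed.append(name)     # degrade: record it and move on
                else:
                    time.sleep(delay)       # retry after a pause
    return failed


# Hypothetical actions: one reliable, one flaky, one broken.
sent: List[str] = []
calls = {"email": 0}


def reply(p: str) -> None:
    sent.append(f"reply:{p}")


def flaky_email(p: str) -> None:
    calls["email"] += 1
    if calls["email"] == 1:
        raise ConnectionError("temporary failure")
    sent.append(f"email:{p}")


def broken_ticket(p: str) -> None:
    raise RuntimeError("ticket system down")


done: Set[str] = set()
failed = deliver(
    "order shipped",
    {"reply": reply, "email": flaky_email, "ticket": broken_ticket},
    done,
)
print("failed:", failed)
print("completed:", sorted(done))
```

Calling `deliver` again with the same `done` set would skip the reply and email and attempt only the ticket, so a later run can pick up where the earlier one left off without sending duplicates.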

Key Points

- AI systems mirror ETL, but operate on context and decisions instead of just data

- Transformation becomes reasoning, making it the most critical and complex stage

- Orchestration is the evolution of ETL, enabling dynamic, resilient, and intelligent workflows

References

- Amazon Web Services. (n.d.). "What is ETL (Extract, Transform, Load)?" Amazon Web Services, Inc. Retrieved March 29, 2026, from https://aws.amazon.com/what-is/etl/

- Huang, J. (2026, March 13). "AI is a 5-Layer Cake." NVIDIA Blog. Retrieved March 29, 2026, from https://blogs.nvidia.com/blog/ai-5-layer-cake/

- IBM. (2026, February 10). "What is AI Orchestration?" IBM Think. Retrieved March 29, 2026, from https://www.ibm.com/think/topics/ai-orchestration

- IBM. (2026, March 19). "What is ETL (Extract, Transform, Load)?" IBM Think. Retrieved March 29, 2026, from https://www.ibm.com/think/topics/etl

- Microsoft. (2026, January 15). "What is Microsoft Foundry?" Microsoft Azure. Retrieved March 29, 2026, from https://azure.microsoft.com/en-us/products/ai-foundry